Orem, Utah — May 8, 2026

AI experts from UVU turned a conference session for veteran auditors into a hands-on AI workshop and sent them home with a blueprint.

Fifty-two internal auditors from across the Utah System of Higher Education gathered at Utah Valley University on May 5 expecting a briefing. What they received was a masterclass in practical artificial intelligence, delivered by two university interns young enough to have grown up alongside the technology they were teaching.

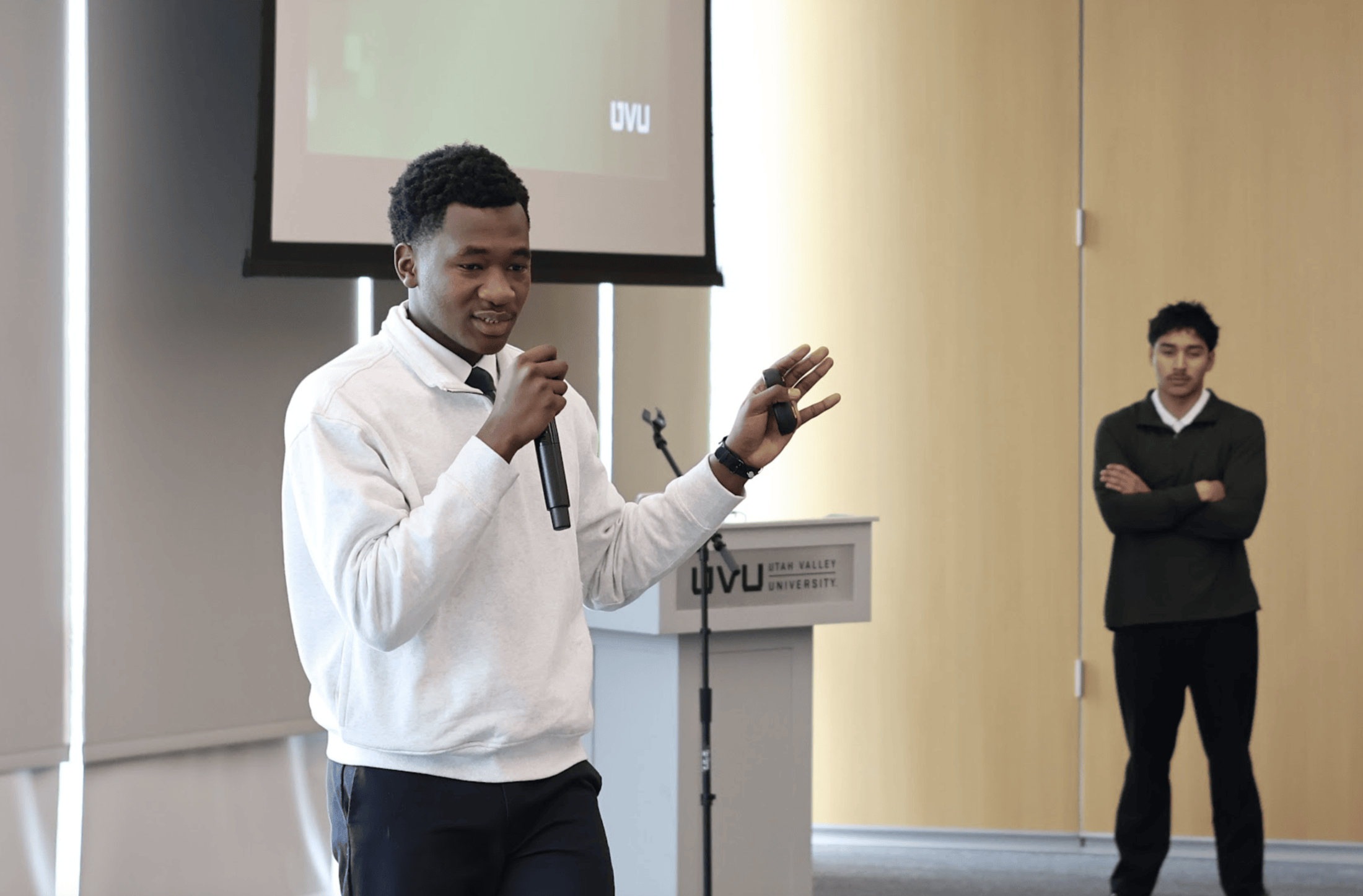

Mohamed Maiga and Ivan Diaz spent roughly an hour demonstrating not just what AI can do in audit environments, but specifically how to build, deploy, refine, and validate AI agents using tools most professionals already have access to. By the time they finished, the auditors in the room, some with thirty years of experience, had a working blueprint for rethinking how they do their jobs.

The Human Argument Comes First

Recent UVU graduate Mohamed Maiga opened the session by making a case that had nothing to do with software.

Drawing on a phrase from outgoing UVU President Astrid Tuminez, "We are human beings, not human doings," he argued that audit-heavy professional environments trap their best people in repetitive manual tasks that crowd out the judgment, collaboration, and relationship-building that organizations actually need most.

"To allow collaboration, you must have time for the human," Maiga told the room. "That's why I love AI. It gives us that power."

The argument carried particular weight coming from Maiga. Originally from Mali, he moved to Utah at seventeen in pursuit of education and opportunity, earned a cybersecurity degree from UVU earlier this month, served as president of the International Students Council, and interned for the vice president of student affairs. He did all this while developing a focused expertise in AI-driven automation. He speaks English, French, and Bambara. His conviction that technology should empower people rather than replace them is not a corporate talking point. It is a personal philosophy, built across continents.

Before presenting a single tool, he made one request of the room: throughout the presentation, identify the most manual, most repetitive process in your own workflow. Because that, he said, is exactly where AI belongs.

A Procurement Card Problem the Whole Room Recognized

Maiga grounded the presentation quickly in a case study that landed with the audience. When he asked how many auditors had encountered procurement card misuse in their work, nearly every hand went up. When he asked whether they believed most of that misuse was intentional, most shook their heads.

That gap, between the complexity of institutional policy and the good intentions of people trying to follow it, is precisely where the Kahlert Applied AI Institute saw an opportunity.

UVU's procurement card guidelines span hundreds of pages. For every purchase, employees must determine whether the expense is permitted under policy. The rules are detailed, spread across multiple documents, and often interpreted inconsistently.

The stakes are high: these are public funds. Questions like "Can I buy cake for a VP's retirement?" and "Are work anniversary gifts covered?" may seem minor, but they generate constant friction. That friction compounds into the kind of unintentional misuse that lands on auditors' desks.

The solution built at the AI Institute: a custom chatbot trained directly on UVU's procurement documents. Employees can now ask plain-language questions and receive clear, policy-grounded answers in seconds: no document searches, no supervisor calls, no guesswork. The project was developed by Tyler Small, Senior Director of the Kahlert Applied AI Institute, who fed the agent the relevant policy files, wrote detailed instructions defining what the agent should and should not do, and selected a model capable of reasoning through nuanced policy language.

"There's a lot of negligence, but not fraud," Maiga said. "People don't want to read the rules because they have other things to do. This solves that."

Four Steps to Building Your Own Agent

From the case study, Maiga walked the auditors through a framework for building their own AI agents, deliberately simple, deliberately accessible.

He started by clearing away the fear. An "agent," he explained, is simply any intelligence that performs work for you, whether automatically or on request. Every time someone uses ChatGPT, Claude, or Gemini, they are already working with an agent. A custom one is just a focused version, trained on specific documents, given specific instructions, built for a specific purpose.

The framework breaks into four steps:

Step 1: Name and describe the agent. Define its purpose in plain language. What does it do? For whom? What problem does it solve? Clarity here sets the foundation for everything that follows.

Step 2: Write the instructions. This, Maiga emphasized repeatedly, is the most important step in the entire process. Instructions must specify what the agent should do and what it explicitly should not do. Use plain English. Be specific. The less ambiguous the language, the more reliable the output. For anyone uncertain where to begin, he offered a practical suggestion: use AI to help draft the instructions themselves. "You want to be directing the AI," he said. "You don't want to get lost in the process."

Step 3: Upload documents directly. A common mistake, Maiga warned, is feeding AI a link and asking it to search for information online. This introduces uncertainty and increases the risk of inaccurate outputs. Text documents uploaded directly give the model exactly what it needs, with no ambiguity about the source.

Step 4: Select a thinking model. Not all AI models perform equally on complex tasks. For high-stakes applications like internal audit, where an incorrect output could inform a flawed finding, choosing a model with genuine reasoning capabilities matters. Maiga pointed to OpenAI's recent releases as benchmarks, and cautioned against locking into any single tool as the field continues to evolve rapidly.

He closed the framework with a reality check: creating the agent is roughly five percent of the work. The remaining ninety-five percent is iteration — testing outputs, adjusting instructions, and refining the agent's performance over time. Like any professional skill, it improves with deliberate practice.
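The four steps can be written down as a simple checklist before anything is built in a specific platform. The Python sketch below is illustrative only: `AgentSpec`, its field names, and the sample values are hypothetical and were not part of the presentation, and the sketch does not call Copilot, ChatGPT, or any other product. It only checks that each step has been addressed and renders the Step 2 instructions as plain English.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical checklist for the four steps; not tied to any platform."""

    name: str                 # Step 1: what the agent is called
    description: str          # Step 1: what it does, and for whom
    must_do: list[str]        # Step 2: what the agent should do
    must_not_do: list[str]    # Step 2: what the agent should not do
    documents: list[str] = field(default_factory=list)  # Step 3: uploaded files
    model: str = ""           # Step 4: the chosen reasoning model

    def problems(self) -> list[str]:
        """Return the gaps that should be fixed before building the agent."""
        issues = []
        if not self.name.strip() or not self.description.strip():
            issues.append("Step 1: give the agent a name and a plain-language purpose.")
        if not self.must_do or not self.must_not_do:
            issues.append("Step 2: state both what the agent should and should not do.")
        if not self.documents:
            issues.append("Step 3: upload at least one source document.")
        elif any(d.lower().startswith(("http://", "https://")) for d in self.documents):
            issues.append("Step 3: upload files directly instead of passing links.")
        if not self.model.strip():
            issues.append("Step 4: select a model with reasoning capabilities.")
        return issues

    def instructions(self) -> str:
        """Render the Step 2 instructions as plain English text."""
        lines = [f"Agent: {self.name}", f"Purpose: {self.description}", "", "Always:"]
        lines += [f"- {item}" for item in self.must_do]
        lines += ["", "Never:"]
        lines += [f"- {item}" for item in self.must_not_do]
        return "\n".join(lines)


# Illustrative values only; the link and the model label are placeholders.
spec = AgentSpec(
    name="Procurement Card Policy Assistant",
    description="Answers employee questions about permitted purchases.",
    must_do=["Cite the policy document and page for every answer."],
    must_not_do=["Answer when the uploaded policy does not cover the question."],
    documents=["https://example.edu/pcard-policy"],
    model="placeholder-reasoning-model",
)
print(spec.problems())      # flags the link passed in place of an uploaded file
print(spec.instructions())
```

Keeping the specification in one place also makes the iteration described above easier to track, since each revision of the instructions can be saved and compared.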

Ivan Diaz: A Live Demonstration of What "Better" Looks Like

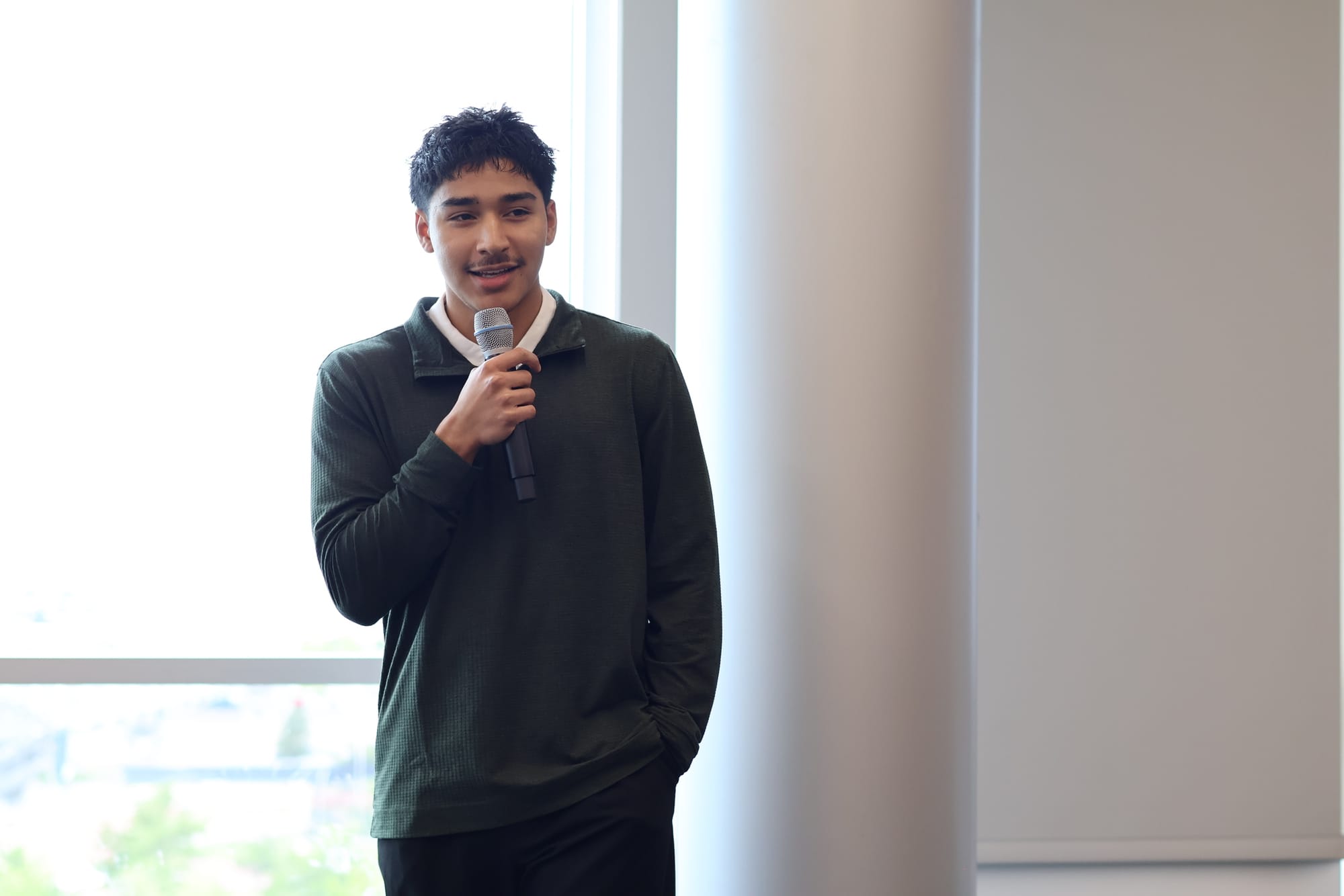

Where Mohamed Maiga built the framework, Ivan Diaz showed it working in real time.

Diaz is an accounting student at UVU with his sights set on a career in public accounting. His project addressed a pain point familiar to anyone who works with institutional financial data: taking raw expense information from multiple departments, each with several funding indexes, spanning multiple fiscal years, and producing a clean, formatted, readable report. Done manually, it consumed hours. So he automated it.

Diaz built his agent in Microsoft Copilot, starting with the basic version available free to UVU students and employees. His development process became its own case study in iterative AI improvement, and an honest account of how the best results rarely come from the first attempt.

He began using Copilot's built-in tools to generate initial prompts but quickly hit the ceiling of the platform's default model. Rather than stopping there, Diaz used Gemini Pro to refine his prompts, iterating over one to two weeks and feeding improved versions back into Copilot. When he discovered that Copilot allowed users to switch the underlying model powering the agent, he made the move to OpenAI's GPT 5.4. The improvement was immediate and significant. Output reliability increased sharply, and Diaz discontinued his use of external refinement tools entirely, working instead directly with the agent to diagnose errors and sharpen instructions on the fly.

"The right model changes everything," he told the auditors. He recommended GPT 5.4 specifically for structured, complex data tasks, and encouraged the room to experiment rather than default to whatever model loads automatically.

One practical note for auditors considering similar projects: Copilot's basic version accepts up to three file inputs per session. The premium version supports up to twenty — a meaningful difference for anyone working across multiple departments, fiscal years, or funding indexes simultaneously. Diaz plans to demonstrate the premium version's full capacity at an upcoming UVU conference.

The Step That Makes AI Trustworthy: Validation

The most technically rigorous element of Diaz's presentation was also, perhaps, its most important for an audience of auditors: what happens after the agent runs.

Diaz developed what he calls a run meta sheet. It is a structured validation log that records, for every agent operation, the timestamp, the files used, the file name, the fiscal year, the index, and the number of rows parsed from the source data. After each run, the row count in the meta sheet is cross-referenced against the original CSV files to confirm the agent processed exactly the data it was supposed to process.

In his live demonstration, Diaz showed the agent had parsed 524 rows from the source file. He pulled up the original CSV. The count matched precisely.
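The row-count check is straightforward to reproduce outside the agent. The Python sketch below is an assumption-laden illustration, not Diaz's actual run meta sheet: the function names, the dictionary keys, and the sample CSV are hypothetical, and it assumes each source file has a single header row. It logs what the agent reported, recounts the rows in the original CSV independently, and records whether the two figures agree.

```python
import csv
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def count_data_rows(csv_path):
    """Count non-empty data rows in a CSV, assuming one header row."""
    with open(csv_path, newline="", encoding="utf-8") as handle:
        reader = csv.reader(handle)
        next(reader, None)  # skip the header row
        return sum(1 for row in reader if row)


def build_meta_entry(csv_path, fiscal_year, index, rows_parsed):
    """Record one agent run; keys mirror the fields named in the article."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "file_name": Path(csv_path).name,
        "fiscal_year": fiscal_year,
        "index": index,
        "rows_parsed": rows_parsed,  # the count the agent reported
    }


def validate_entry(entry, csv_path):
    """Recount the source rows and flag any mismatch with the logged count."""
    rows_in_source = count_data_rows(csv_path)
    return {
        **entry,
        "rows_in_source": rows_in_source,
        "matches": rows_in_source == entry["rows_parsed"],
    }


if __name__ == "__main__":
    # Hypothetical sample data standing in for a real expense export.
    with tempfile.TemporaryDirectory() as tmp:
        source = Path(tmp) / "expenses_sample.csv"
        source.write_text(
            "index,description,amount\nA100,Supplies,25.00\nA100,Travel,310.50\n",
            encoding="utf-8",
        )
        entry = build_meta_entry(source, "FY2026", "A100", rows_parsed=2)
        print(validate_entry(entry, source))
```

A mismatch does not say which rows were dropped or duplicated, only that the run needs a closer look before its output is relied on.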

"Validating AI outputs is crucial for forming reliable opinions," Diaz stated. "If you can't verify what the agent did, you can't trust what it produced."

For a room of internal auditors, professionals whose entire discipline is built on documented, verifiable evidence, the validation framework was perhaps the session's most resonant idea. AI is not a black box that produces answers to be accepted at face value. It is a tool whose outputs can and must be tested, documented, and confirmed.

The run meta sheet approach carries an additional benefit: transparency. The agent displays the Python code it generates during data processing. Users do not need to know Python to work with the agent effectively, but for those who want to understand precisely what the system did, or need to document the methodology for audit purposes, the visibility is there.

Diaz also addressed data security directly. During the period when he used Gemini and Claude for prompt refinement, no confidential or sensitive information was shared with those external services. The prompts contained only instructions — no files, no financial records, no personally identifiable information. For institutions with strict data governance requirements, the development approach was designed with compliance in mind from the start.

AI Literacy Is No Longer Optional

Neither Maiga nor Diaz stated it quite so plainly, but the subtext running through the entire session was clear: for internal auditors, AI literacy is becoming a core professional competency. It is not a supplementary skill or a pilot program, but a fundamental capability with direct implications for the quality and efficiency of audit work.

"There's a real need in this profession for AI literacy," Diaz said. "Being able to understand it, run it, and use it to work more efficiently."

Maiga framed it from the other direction, returning to the idea that had opened the session: AI is most powerful not when it replaces professional judgment, but when it removes the manual burden that prevents professionals from exercising that judgment in the first place.

"The relationships you build are what matter," he said. "AI gives you the time to build them."

For fifty-two auditors who drove from across the state of Utah to be in that room, the message was the same: the manual work piling up on your desk has a solution. And two UVU students just showed you how to build it.

GenAI Guidelines for Auditors

The following guidelines were presented by Mohamed Maiga and Ivan Diaz of the Kahlert Applied AI Institute to help optimize interactions with generative AI and integrate automated agents into manual audit workflows:

- Save and Manage Agents: Save scripts externally for reuse and enhancement.

- Prioritize Input Quality: Output quality depends directly on instruction quality.

- Set Strict Boundaries: Tell the AI not to respond if it cannot meet requirements.

- Request Verification: Always ask for sources, page numbers, and levels of certainty.

- Use Natural Language: Speak to the AI like a human to improve comprehension.

- Define Purpose: Clearly state the "why" and provide relevant context.

- Embrace Iteration: Expect to fine-tune and go through several versions.

- Human Oversight: Always verify outputs; AI handles errors, but humans ensure accuracy.

- Two-Way Assessment: Evaluate AI potential both before and after completing a task.

Mohamed Maiga (recent UVU graduate) and Ivan Diaz (accounting major) presented an AI agent workshop for 52 USHE auditors as part of an audit conference at UVU on May 5, 2026. UVU AI students will provide a follow-up demonstration using Copilot's premium version, capable of processing up to twenty files simultaneously, at an upcoming UVU conference.

Learn more about the Kahlert Applied AI Institute here.