A UVU student's AI-powered device could end a painful — and costly — diagnostic procedure for joint replacement patients.

Orem, Utah — May 6, 2026

The Problem No One Wanted to Talk About

When someone receives a knee implant, they begin a quiet waiting game. Infection is a persistent risk — not dramatically common, but catastrophic when it arrives. And for years, the only reliable way to know whether bacterial biofilm had taken hold on the implant was to take an invasive sample from an already sensitive injury. If infection was found, the fix was straightforward: antibiotics. But confirming the presence of infection required surgery. The aggressive procedure was the diagnosis.

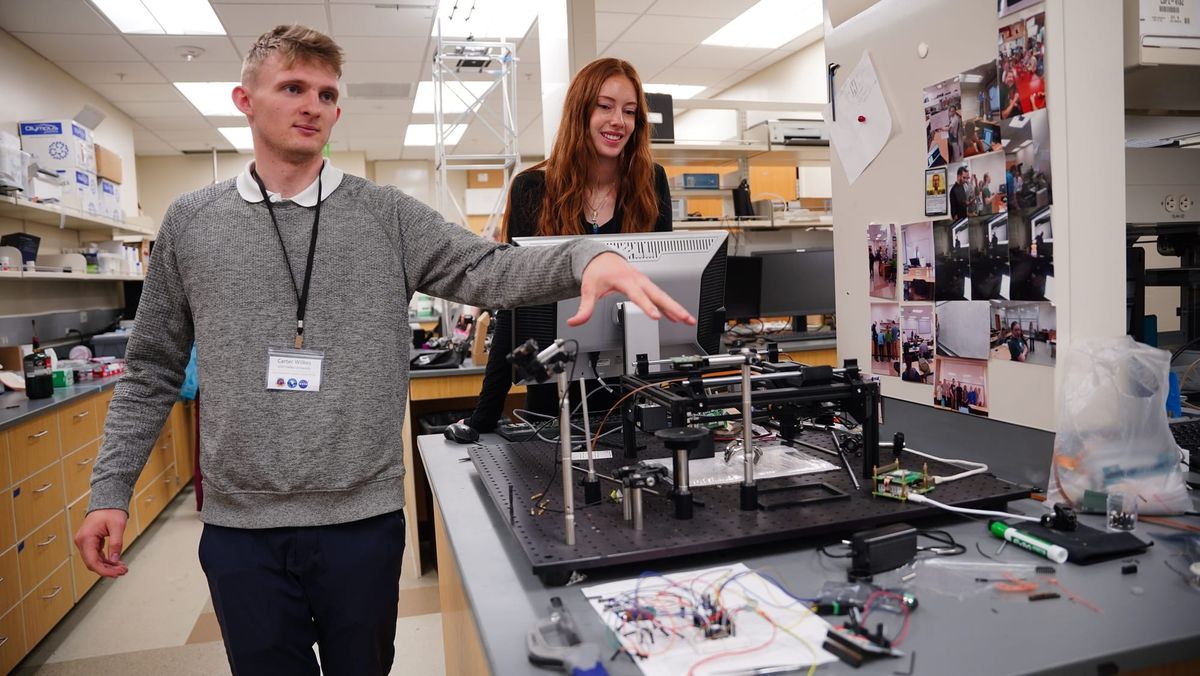

Carter Wilkes, a physics undergraduate at Utah Valley University, is working to make that invasive step unnecessary. In UVU's Center for Imaging and Biophotonics Experiments Advancing Medicine (CIBEAM), under faculty advisor Dr. Vern Hart, Wilkes and his team have built a device called DIFFRAX (Diffraction Imaging for Film Formation and Reflective Anomaly Extraction). It uses a red laser and a custom-trained neural network to detect bacterial infection through the skin, without cutting or scraping, and in about five minutes.

DIFFRAX isn't the lab's first attempt to reimagine how AI and imaging can detect what was previously invisible. Wilkes has previously worked on RUTID, a rapid UTI detection device, as part of CIBEAM's broader focus on using optics and imaging to make diagnostics faster and less invasive. The knee implant project represents the lab's most clinically urgent application yet.

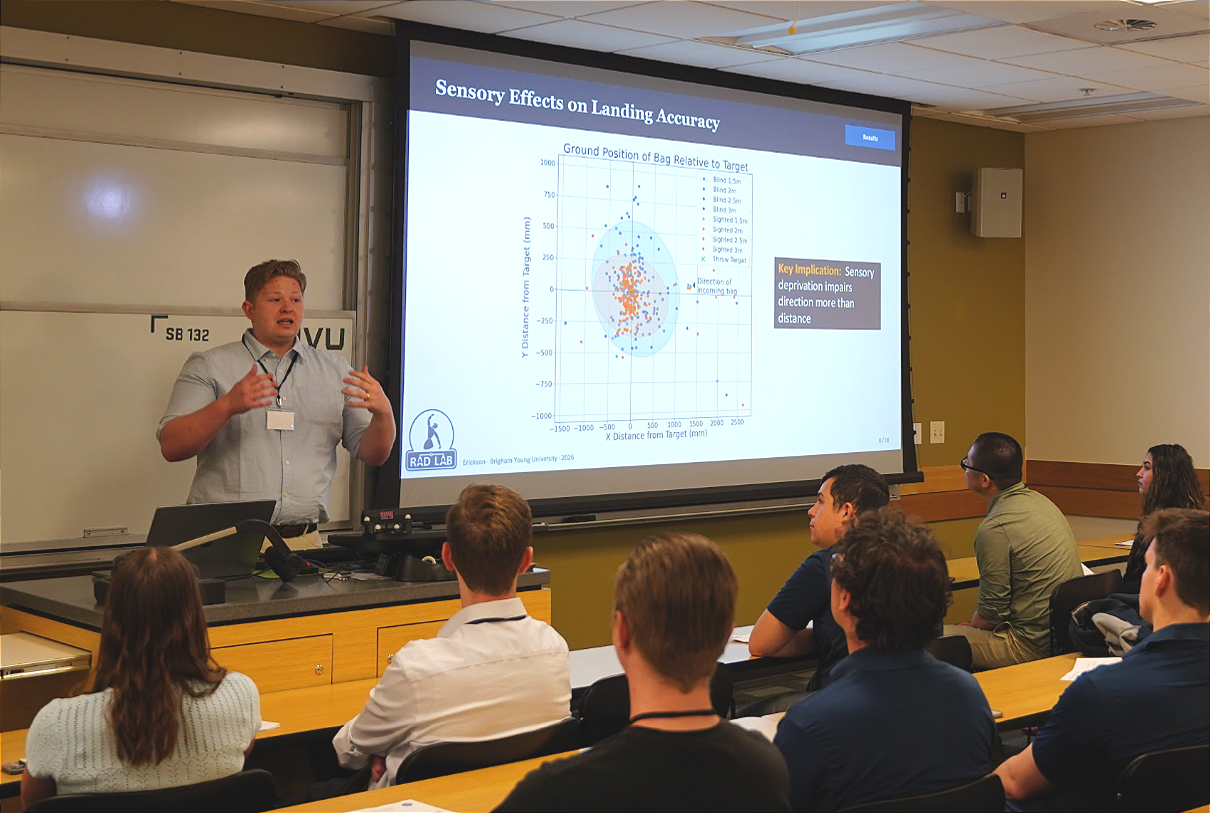

Wilkes presented his research on May 4, 2026, at the 32nd Annual Utah NASA Space Grant Consortium Fellowship Symposium, held at Utah Valley University's Science Building in Orem. The event brought together undergraduate and graduate students, faculty, and institutional partners from universities across the state — including BYU, Utah State, the University of Utah, Utah Tech, Snow College, and Salt Lake Community College — to present research spanning aerospace, biomedical innovation, robotics, and engineering.

Opening the morning's proceedings, Dr. Daniel Horns, Dean of UVU's College of Science, reminded students that the stakes of the day were higher than a line on a résumé. "The fact that you're doing the work that got you here to present a poster or an oral talk means that you're doing research beyond the classroom," he said. "You're learning skills and knowledge that can't be gained in the classroom." Wilkes is doing exactly that through his novel scanning technology.

Built From Parts, Built for Patients

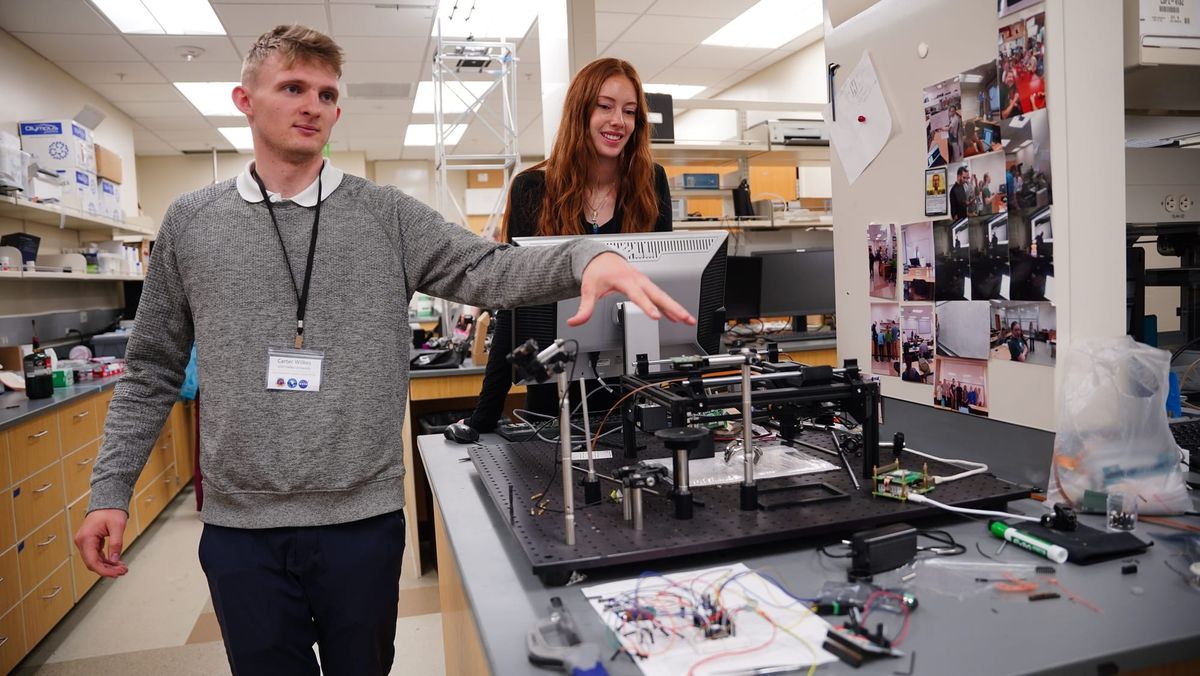

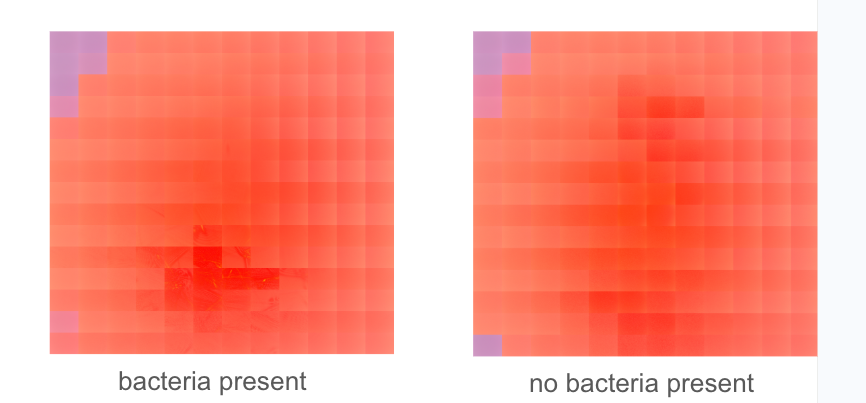

The DIFFRAX scanner doesn't look like medical equipment. Resting on a vibration-dampening optical table in UVU's CIBEAM lab, it resembles what it partly is: a heavily modified 3D printer. The open black aluminum frame, the dual steel rod rails, the belt-and-carriage movement system: the machine's skeleton is unmistakably borrowed from consumer desktop fabrication technology. But where a 3D printer deposits material, DIFFRAX fires a 635 nm red diode laser across a scan bed, methodically mapping the surface beneath it point by point.

At the center of the scan bed, taped carefully to a sheet of paper with hand-drawn alignment markings, sits a chrome knee implant. It is the machine's only patient — for now. The laser travels down, strikes the implant's reflective surface, and bounces back to a CMOS beam profiler mounted above. An orange ribbon cable carries that signal to the device's brain: a Raspberry Pi 4, the same $99 computer used in school science projects and hobbyist electronics kits worldwide. Stitching code assembles each reflected data point into a complete diffraction map of the implant surface.

The entire bill of parts totals less than $400.

In the lab, Wilkes walked through the process with the ease of someone who has operated it many times. The camera mounted at the bottom of the carriage detects photon scattering patterns as the laser fires at the implant below. Two stepper motors drive the carriage across its x and y axes, rastering back and forth in a grid, "literally just like a 3D printer," Wilkes explained. The system needs only 136 images to generate a result with a reliable confidence interval. When the scan is complete, the device displays its findings directly on screen, telling the operator — in Wilkes's words — "with what accuracy confidence interval you have a biofilm." For conferences and eventual clinical use, the entire system runs portably: connect it, press Scan, and wait.
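The back-and-forth raster Wilkes describes can be sketched in a few lines of Python. This is a minimal illustration, not the team's code: the function name `raster_path` and the 8 x 17 grid are assumptions, chosen only because 8 x 17 happens to equal the 136 images the article mentions. The actual grid dimensions are not stated.

```python
def raster_path(rows, cols):
    """Return (row, col) grid positions in serpentine order."""
    path = []
    for r in range(rows):
        # Even rows sweep left to right, odd rows right to left, so the
        # carriage never travels back across the bed between rows.
        col_order = range(cols) if r % 2 == 0 else range(cols - 1, -1, -1)
        for c in col_order:
            path.append((r, c))
    return path


# Hypothetical grid size (assumption): 8 rows x 17 columns = 136 positions.
ROWS, COLS = 8, 17
path = raster_path(ROWS, COLS)

print(len(path))      # 136
print(path[16:19])    # [(0, 16), (1, 16), (1, 15)]
```

Each consecutive pair of positions differs by exactly one grid step, which is the property that lets two stepper motors cover the bed without wasted travel.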

How DIFFRAX Works: Light, Reflection, and a Trained Eye

The physics behind DIFFRAX begins with a property of red light that Wilkes describes with characteristic directness: "You can actually shoot a red laser into skin, and it can get about eight millimeters in before it completely diffuses." For a knee implant positioned just beneath the skin's surface, that penetration depth is precisely what's needed. The laser reaches the implant and reflects back — carrying with it information about what it passed through.

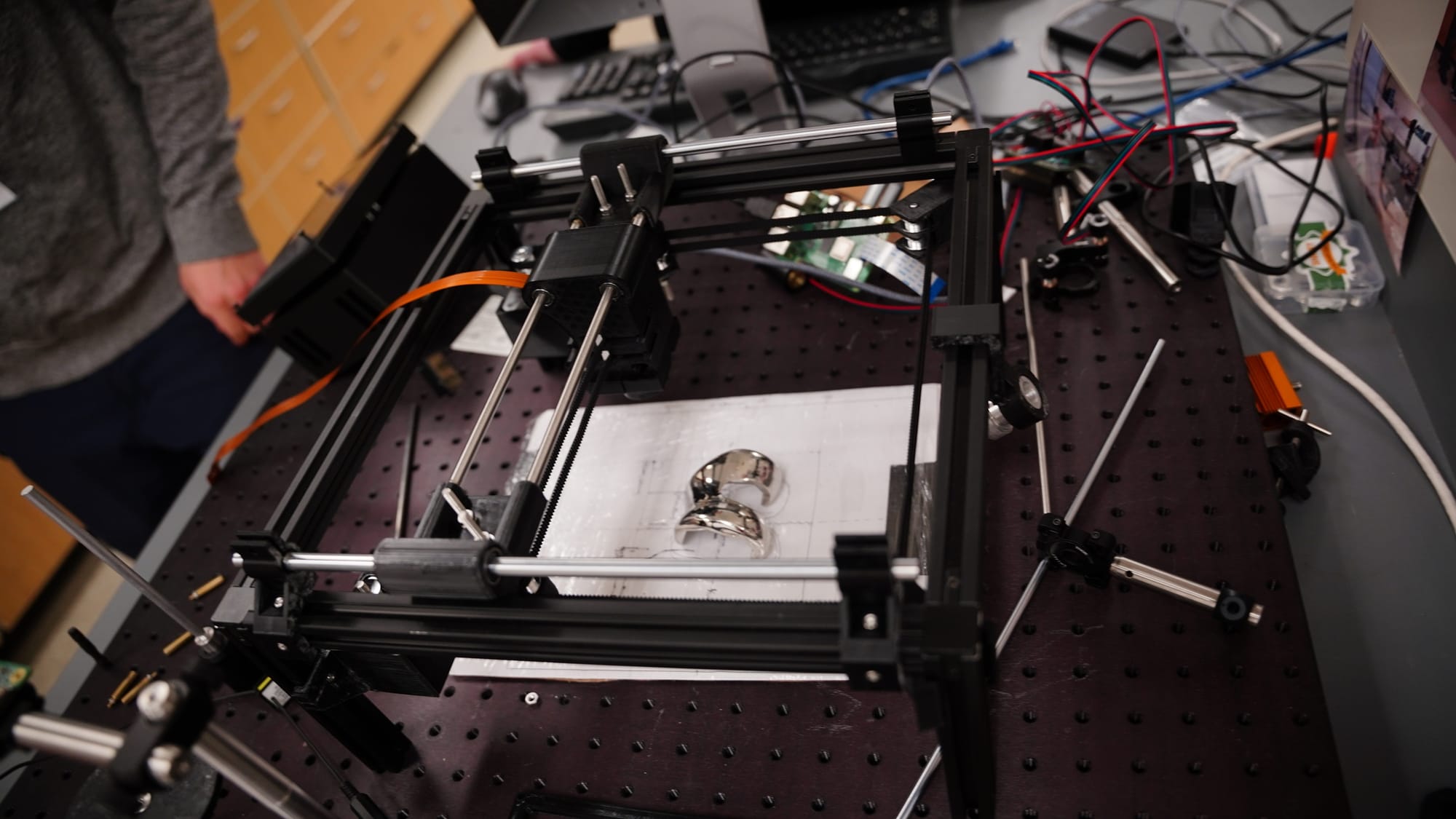

That reflected signal becomes a diffraction map, a pixelated grid image recording how light scattered across the implant surface. To the untrained eye, a clean map and an infected one look nearly identical: abstract arrangements of light and dark squares with no obvious pattern. That invisibility is precisely the problem DIFFRAX was built to solve.
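To make the idea of a "pixelated grid" concrete, here is a rough Python sketch of how individual readings might be stitched into a map of light and dark squares. It is not DIFFRAX's stitching code: the function names, the 0.5 threshold, and the intensity values are all made up for illustration.

```python
def stitch_map(readings, rows, cols):
    """Place (row, col, intensity) readings into a rows x cols grid."""
    grid = [[None] * cols for _ in range(rows)]
    for r, c, value in readings:
        if not (0 <= r < rows and 0 <= c < cols):
            raise ValueError(f"reading at ({r}, {c}) is outside the grid")
        grid[r][c] = value
    return grid


def render(grid, threshold):
    """Draw the map as light ('.') and dark ('#') squares; '?' marks gaps."""
    lines = []
    for row in grid:
        lines.append("".join(
            "?" if v is None else ("#" if v < threshold else ".")
            for v in row
        ))
    return "\n".join(lines)


# Made-up intensities on a 3 x 3 grid (illustrative only, not measured data).
readings = [
    (0, 0, 0.9), (0, 1, 0.2), (0, 2, 0.8),
    (1, 0, 0.1), (1, 1, 0.7), (1, 2, 0.6),
    (2, 0, 0.9), (2, 1, 0.8), (2, 2, 0.3),
]
grid = stitch_map(readings, rows=3, cols=3)
print(render(grid, threshold=0.5))
# .#.
# #..
# ..#
```

Because each reading carries its own grid position, the order in which the scanner visits points does not matter to the stitching step.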

"We have trained a neural network to see the minute differences between a no-bacteria sample and a bacteria sample," Wilkes explained. The system has been trained on thousands of images, learning to detect patterns invisible to human perception — subtle variations in how light scatters and reflects when biofilm is present. In the infected diffraction maps, a dark cross-shaped anomaly emerges at the center, absent in clean samples. A human analyst would likely miss it. The neural network does not. "We wouldn't be able to use this without AI," Wilkes said plainly.

The results so far are striking. In testing, the system has achieved 99.2 percent accuracy in distinguishing infected from uninfected samples. The patient experience, should the device reach clinical deployment, would be a five-minute scan — non-invasive, repeatable, and schedulable as a routine follow-up. Wilkes envisions patients returning "once a week for three months" after surgery, catching problems early rather than waiting for symptoms to force a more drastic response.
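For readers unfamiliar with the metric, accuracy is simply the share of samples the classifier labels correctly. The short Python sketch below shows the arithmetic. The counts are hypothetical, picked only so the result lands on 99.2 percent; the article does not report the team's test-set size or error breakdown.

```python
def accuracy(true_pos, true_neg, false_pos, false_neg):
    """Fraction of all samples that were classified correctly."""
    total = true_pos + true_neg + false_pos + false_neg
    if total == 0:
        raise ValueError("no samples to score")
    return (true_pos + true_neg) / total


# Hypothetical counts (assumption): 992 correct calls out of 1,000 samples.
acc = accuracy(true_pos=497, true_neg=495, false_pos=5, false_neg=3)
print(f"{acc:.1%}")  # 99.2%
```

Accuracy alone does not say how the errors split between missed infections and false alarms, which is why the separate false-positive and false-negative counts appear in the function.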

What Early Detection Actually Means

The financial stakes of the problem Wilkes is addressing are not abstract. When bacterial infection in a joint implant goes undetected until it becomes symptomatic, the result is almost always additional surgery. "Those revision surgeries can cost anywhere from $50,000 to $100,000," Wilkes noted. A five-minute weekly scan from a modest machine built from $400 in parts, by comparison, is a rounding error — and that contrast is not lost on the team.
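The "rounding error" comparison is easy to check. The Python sketch below uses only the figures quoted in this article and compares hardware parts against the cost of a single revision surgery. It says nothing about what a clinical scan would actually cost per patient, which the article does not state.

```python
PARTS_COST = 400                         # parts total, upper bound per the article
REVISION_COST_RANGE = (50_000, 100_000)  # revision surgery range quoted by Wilkes


def parts_as_percent_of(cost, parts=PARTS_COST):
    """Hardware parts cost as a percentage of one surgery's cost."""
    return 100 * parts / cost


for cost in REVISION_COST_RANGE:
    pct = parts_as_percent_of(cost)
    print(f"${cost:,}: parts are {pct:.1f}% of one revision surgery")
# $50,000: parts are 0.8% of one revision surgery
# $100,000: parts are 0.4% of one revision surgery
```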

But the more significant cost is the one that doesn't appear on a bill. Patients who develop serious post-implant infections often face months of additional treatment, prolonged immobility, and in severe cases, implant removal. For patients who have already endured major surgery, early detection through a noninvasive tool like DIFFRAX wouldn't just save money—it could reshape the path to recovery.

The project is labeled "Bench to Bedside" on the DIFFRAX poster, a deliberate framing that signals the team's intent to move the research beyond the lab. Biofilms, the poster notes, are responsible for over 60 percent of hospital-acquired infections, contributing to hundreds of thousands of implant-related infections annually in the U.S. The CIBEAM team, which includes more than a dozen student researchers alongside Dr. Hart, is building the evidence base that a future clinical or regulatory pathway would require.

Across the Symposium: Technology at the Edge of What's Known

The DIFFRAX project was the most clinically immediate of the day's presentations, but it was not alone in using computational tools to solve problems that previous methods couldn't reach.

Brigham Young University student Austin Erickson presented his research on a deceptively simple task: two people throwing an object together. His work, conducted under Dr. Marc Killpack, focused on how human coordination actually emerges in this scenario — and what it means for robotics. "Throwing is a really interesting task where the whole performance is determined by a single release event," Erickson explained. What he found was that effective coordination doesn't depend on timing or communication. "Coordination emerges through a sensing of motion, rather than synchronization and release." The implication for robotic systems is significant: rather than programming exact movements, future robots could be designed to respond dynamically to a partner's motion, reading it in real time the way humans instinctively do.

Braxton Wiggins, a graduate student at Utah State University, presented work on ultra-high-pressure combustion systems: specifically, the design of a jet-stirred reactor capable of testing fuels at conditions that current computational models cannot reliably simulate. "Once you get to above the supercritical pressure points of these fuels, the computations get very complicated and intensive and don't exactly know if they're right," Wiggins explained. His physical testing apparatus exists to validate and correct those models, with direct implications for the efficiency of rocket engines operating at extreme conditions.

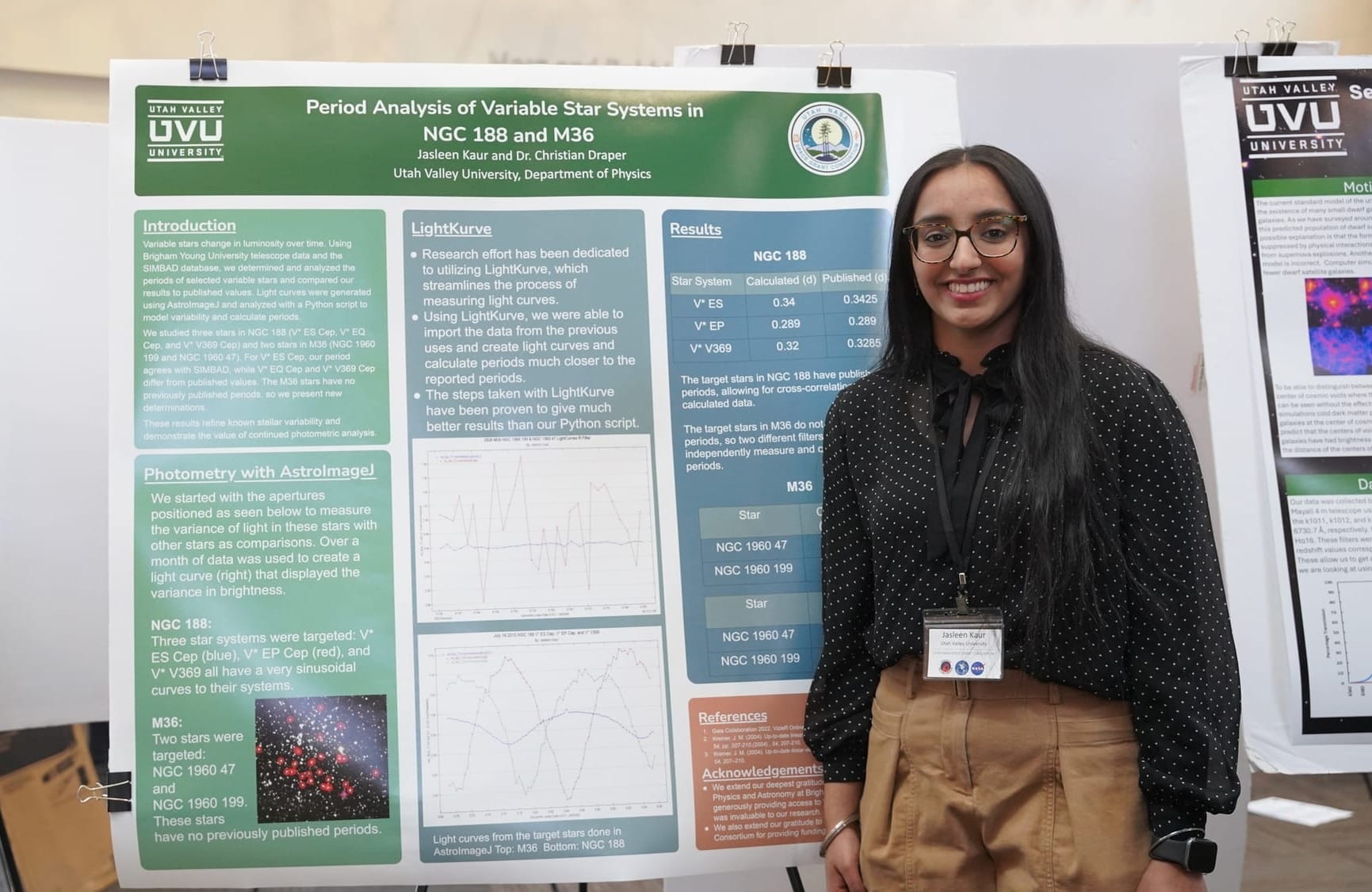

UVU student Jasleen Kaur brought a different scale of inquiry to the symposium's poster session: not the interior of a knee, but the interior of a galaxy. Working under Dr. Christian Draper in UVU's Department of Physics, Kaur is analyzing variable stars in two open star clusters, NGC 188 and M36, using telescope data from BYU's West Mountain Observatory processed through Python-based photometry tools. For M36, no published rotational period exists for either of the two stars she is studying, so Kaur's calculations are original results. For NGC 188, where the last published measurements date to 2003, her work checks whether those stars still behave as expected, a quieter but no less necessary contribution. The broader significance lies in what astronomers call standard candles: variable stars whose predictable behavior makes them reliable reference points for measuring distances across space. If those reference points drift and the data isn't updated, Kaur explained, everything calculated from them has to be revised, including galaxy-to-galaxy distance measurements built on decades of prior work.

Research Beyond the Classroom

What connected the day's most compelling presentations was not a shared discipline but a shared method: identify the point at which existing tools fail, then build something that works past that limit. For Wilkes, the limit was human perception: no eye, trained or untrained, can see bacterial biofilm through skin, let alone detect it with consistent accuracy, so that job falls to a laser paired with a neural network. For Erickson, it was the assumption that coordination requires communication. For Wiggins, it was the gap between what computational models predict and what actually happens at extreme pressure. For Kaur, it was outdated reference data that could throw off the distance measurements built on it.

In each case, the researchers didn't accept the limit as a boundary. They built instruments (physical, computational, or both) to see past it. In Wilkes's case, that instrument started as a broken 3D printer and a hobbyist computer, and became a device that may one day spare patients from surgery they never needed.

That disposition, Dr. Horns suggested in his opening remarks, is what distinguishes research from coursework. "You're learning skills and knowledge that can't be gained in the classroom," he told the assembled students. At a symposium where an undergraduate built a potential medical diagnostic tool from salvaged parts and an AI trained to see what human eyes cannot, the point was difficult to argue with.

Learn more about the NASA Space Grant Program at www.utahspacegrant.org.

This article is part of a series by the UVU Kahlert Applied AI Institute highlighting the real-world impact of AI. Stay tuned—there is more to come.